January 04, 2011

By Allan Jones

On several occasions, I've worked up a rifle load that shot a nice five-shot group and then loaded up a box or two with that charge weight.

Don't discount a "flyer" as fouled data unless you know you pulled the shot. It may have been a real variation.

Later, I took the ammo to the range and found it was not shooting quite the nice groups that I saw in the original work-up.

Nothing changed. Several times I had even left the bullet-seating die in the press so I would be sure that the larger quantity I loaded would be seated just like the test shots. I questioned everything, but none of the usual suspects explained the anomalies.

It took me a long time to snap to the problem. The answer was so simple that it was one of those "bang-hand-on-forehead" moments: sample size.

Making Numbers Work

Statistics is the subdivision of mathematics that provides us tools to understand numerical data. A big pile of numbers is pretty useless unless you can organize, manage, and study it in a way that helps you accomplish a task--make nuts and bolts, study an infectious disease, or develop reloading data.

I will be the first to admit that statistics has been abused more than a rented Jeep on a prairie dog hunt, often to twist some social data to one's political agenda. I'm not talking about that. I am going to look at how the simplest statistical principles can help you avoid the odd results I experienced.

Everything that gets built needs to conform to standards. If you're building lug nuts for the automotive industry, all those nuts have to fit standard shaft threads, and the outside must fit the hex socket of a wheel tool. With today's digital-inspection equipment, it's possible to test every nut for many different parameters and ship with the confidence that every lug nut in the load meets or exceeds specifications.

But let's change the product to something consumable like beer. Fancy digital equipment can tell you that each bottle is unflawed, filled to precisely the right level, and even that the beer's color and temperature are perfect. However, electronics can't tell you that this batch tastes right. That can't be done without someone taking a swig.

In the case of lug nuts, the testing is nondestructive. The beer testing requires some of the product be consumed in testing and is destructive. Ammo, whether you or a big ammo plant loads it, falls into the second category. You can check every round for overall length and visual defects, but even the latest laser-measuring and digital-inspection equipment cannot tell if the ammo meets velocity, pressure, or accuracy standards. For that, you have to pull some triggers and empty some cases.

With ammunition, destructive testing of every item means there is nothing left to ship or, for the hobbyist, nothing left to shoot. So how do we deal with this?

It Takes a Plan

The ammo manufacturer needs a plan for quality testing that destructively tests a sub-amount of a product batch yet gives confidence that the rest of the batch meets standards. Done right, the plan will leave plenty of product left to ship to market and keep stockholders happy. Here's where the power of pure statistics comes into play.

Sample size is a percentage of a batch, or lot, determined by the level of confidence required. The system used by most U.S. companies evolved from military quality standards. For every parameter specified, there is an acceptable quality level (AQL) that expresses the maximum level of a specific defect that can be tolerated. The AQL depends on the parameter, especially for ammunition. Detecting excessive pressure is more important to safety than detecting a cosmetic stain on a cartridge case. The AQL for pressure is set much more strictly to give the manufacturer a statistical confidence that the entire lot is safe. The AQL establishes how much of the product is consumed in testing.

From here, things get rapidly beyond the scope of this column and publication. When I came from a consumer background to a modern ammo-making facility in 1987, I was blown away by how a modern quality assurance department uses advanced statistics and how much perfectly good ammo is shot up each year to establish that every lot is safe and meets customer needs.

Seeing how the "big guys" do it helped me understand the importance of sample size to the hobby shooter and handloader. Making good decisions about sample size does not require a college degree in math, and it does not impose great limitations on what you do.

Prove It Yourself

If you need convincing of the effect of sample size, a simple test is no farther away than the change in your pocket. Flipping a coin is a classic example of sample-size effects. Assuming the coin does not hit something sticky and stand on its edge, there are only two ways it can fall--heads or tails.

Logically, you know that there is a 50-percent chance of getting one side or the other. But start flipping the coin. If you flip it five times, it's possible for it to come up heads four times. That's 80 percent heads, obviously a spurious representation of the truth. Now do a series of 10 flips, then 15. See what's happening? As you increase the number of flips per sample, the test results approach what your brain knows is the true value: 50 percent. At the point where you feel the results are close enough for your confidence, you have found the sample size required to make you confident in the outcome.
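If you would rather not wear out your thumb, the coin-flip test can be sketched in a few lines of Python. This is only an illustration: the function names, the 2,000 repeated samples, and the fixed random seed are my own assumptions, chosen so the run is repeatable.

```python
import random


def heads_fraction(num_flips, rng):
    """Flip a fair coin num_flips times and return the fraction of heads."""
    heads = sum(rng.random() < 0.5 for _ in range(num_flips))
    return heads / num_flips


def spread_of_samples(num_flips, trials=2000, seed=1):
    """Return the (lowest, highest) heads fraction seen across many samples.

    trials and seed are arbitrary illustration values, not recommendations.
    """
    rng = random.Random(seed)
    fractions = [heads_fraction(num_flips, rng) for _ in range(trials)]
    return min(fractions), max(fractions)


if __name__ == "__main__":
    for n in (5, 10, 15, 100, 1000):
        low, high = spread_of_samples(n)
        print(f"{n:>5} flips per sample: heads ranged from {low:.0%} to {high:.0%}")
```

Each line of output shows how far individual samples of that size strayed from the true 50 percent. The range should tighten as the number of flips per sample grows, which is the same effect you see when you move from a small test group to a larger one.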

If you're chronographing ammo or shooting groups for accuracy, you seldom know beforehand what the result should be, but sample size is now important for one overpowering reason: The results of a sample must be representative of the total batch. That only happens when sample size is adequate. I've known guys who load 1,000 rounds of varmint ammo, shoot a single five-shot group, and call it accurate. They return from the hunt complaining about missing too many prairie dogs, blaming everything from weather to bad burritos. They are ignoring the real problem. They didn't test enough from the batch to get a true picture of its accuracy.

To a point, the more you shoot, the higher confidence you have in how accurate a batch will be. Yet components are getting expensive, and it's hard to find time to get to the range. What can you do to maximize your confidence without spending all the baby-food money?

While I was on vacation one year, an outdoor writer called for me at Speer and ended up transferred to our quality department. His question was a great one: "What is the least number of shots fired in one group that gives me high confidence that the whole batch is accurate?"

A Ph.D. statistician went to work, applying all the classic statistical methods to the question. Even if I could, I won't attempt to document his work here, but the number he derived was seven. "Doc" was able to demonstrate a jump in statistical confidence level between a five- and a seven-shot group that was remarkable.

The great thing about this is that by firing only two more cartridges for accuracy than we used to (assuming the five-shot group), we have a much better picture of the accuracy potential of that batch of ammo into which we just invested a bunch of time and money. Many of you chronograph at the same time you shoot the groups. The statistics are still the same. You have a better picture of the average velocity of the group in firing seven rounds instead of five.

Understand that this is a good first step, representing the minimum efforts that can yield good if not great results.
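For the curious, here is a rough simulation of how much group size wanders from one group to the next when nothing about the load changes. It is not the statistician's method, which I don't have. It is a sketch that assumes each shot lands with independent, equal, normally distributed horizontal and vertical error, and it measures group size as the largest center-to-center distance. The function names, trial count, and seed are hypothetical.

```python
import math
import random
from statistics import mean, stdev


def group_size(num_shots, rng, sigma=1.0):
    """Extreme spread (largest center-to-center distance) of one simulated group."""
    shots = [(rng.gauss(0, sigma), rng.gauss(0, sigma)) for _ in range(num_shots)]
    return max(
        math.dist(a, b) for i, a in enumerate(shots) for b in shots[i + 1:]
    )


def relative_scatter(num_shots, trials=4000, seed=7):
    """Standard deviation of group size divided by mean group size.

    A smaller value means one group is a more trustworthy picture of the load.
    """
    rng = random.Random(seed)
    sizes = [group_size(num_shots, rng) for _ in range(trials)]
    return stdev(sizes) / mean(sizes)


if __name__ == "__main__":
    for n in (3, 5, 7):
        print(f"{n}-shot groups: relative scatter {relative_scatter(n):.2f}")
```

Under these assumptions, the relative scatter shrinks as shots are added to the group, so a single seven-shot group tells you more about the batch than a single five-shot or three-shot group. The exact numbers depend on the model, so treat them as a demonstration of the trend and not as a confidence level.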

Gathering Data

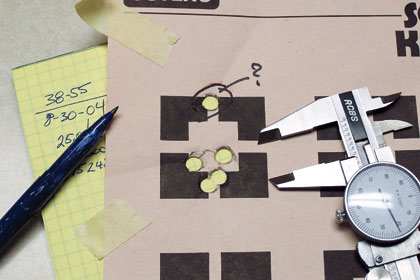

There is one caution I include for accuracy testing, and it concerns the "flyer," that odd bullet hole that looks lonelier than an unwashed gorilla at the senior prom. We have a natural tendency to toss it out as a "fluke," but that can distort your understanding about the accuracy of the batch. If you absolutely know you muffed the shot--your hand slipped, the guy at the next bench dropped his toolbox and made you jump, or the wind flipped the target--it's reasonable to toss that shot from the data set. On the other hand, if you cannot quickly and honestly associate the flyer with some external interference, keep the shot in the data. It could be a real variation and must be included if you value sound decisions.

If you shoot more than one group, or if you have a string of velocity readings of individual shots, you should average the results. Most people know how to do this. In the event your chrono doesn't have that function programmed or it's simply been too long since the seventh grade, here's how: Add up the data numbers and divide by the number of data points. If you have seven velocities for individual shots, add them and divide by seven.
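If a spreadsheet or a few lines of code is handier than a pencil, the same arithmetic looks like this in Python. The seven velocities are made-up numbers for illustration, not test data.

```python
# Hypothetical chronograph readings in feet per second (illustrative only).
velocities_fps = [2712, 2698, 2705, 2721, 2690, 2709, 2701]


def average(values):
    """Add up the values and divide by the number of values."""
    return sum(values) / len(values)


print(f"Average velocity: {average(velocities_fps):.1f} fps")
```

With these sample numbers the total is 18,936, and dividing by seven gives an average of about 2,705 fps.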

If you have the time and the budget, you can achieve a higher level of confidence by shooting two or more five-shot groups. Averaging the group sizes gives a very representative picture. When doing bullet development testing, I always had the lab fire five five-shot groups. We averaged the results, carefully watching for any single group of the five that was severely different from the others. There are additional statistical tools that let you determine if such variations in grouped data are significant, but the hobby handloader can usually spot the "wild one" easily.
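Averaging several groups and eyeballing the odd one can also be sketched in Python. The group sizes below are invented, and the "more than 1.5 times the median" rule is only a stand-in for your own eye, not one of the formal statistical tests mentioned above.

```python
from statistics import mean, median


def summarize_groups(group_sizes_in, wild_factor=1.5):
    """Return the average group size and any groups that look "wild".

    wild_factor is an arbitrary illustration threshold: a group is flagged
    when it is more than wild_factor times the median group size.
    """
    avg = mean(group_sizes_in)
    mid = median(group_sizes_in)
    wild = [g for g in group_sizes_in if g > wild_factor * mid]
    return avg, wild


# Hypothetical five-shot group sizes in inches (illustrative only).
groups = [0.82, 0.95, 0.78, 1.90, 0.88]
avg, wild = summarize_groups(groups)
print(f"Average of {len(groups)} groups: {avg:.2f} in")
print(f"Groups worth a second look: {wild}")
```

A flagged group is a prompt to ask what happened on that string, not permission to throw it away. Unless you can tie it to a known outside cause, it stays in the average.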

Then there are those who say, "I never shoot more than two shots at game, so a three-shot group is all I need."

I wish I had a nickel for every time I've heard this. People who say this often fail to appreciate the difference between testing accuracy and adjusting point of aim. Where the bullet lands in true accuracy testing is pretty flexible as long as you can find the group to measure it. Once you find an accurate load, then you can shoot three-shot groups if you must to get the group to where your sights are looking. Realistically, a little more shooting at this point would help, but I try to be practical. This is a better place for smaller shot strings, especially if you're trying to do cool-bore testing. If you really want to be practical, fire a couple of fouling shots downrange before starting point-of-aim testing and then let the barrel cool. The bullets fired "for record" all encounter similar frictional forces going down the bore.

Getting realistic test results is within the scope of anyone smart enough to learn to shoot. Should your inquiring mind wish to apply more stringent statistical methods, there are plenty of books and websites with detailed information on statistics. It's not my purpose in this column to make you a math whiz, but I do want to get you thinking about sample size. Next time you believe a three-shot group will tell you a batch of 500 handloads is accurate, stick your hand in your pocket and remember the coin-flip test.